Hila

Vianai Systems · Product Design

Conversational AI for enterprise financial analysis.

The Insight

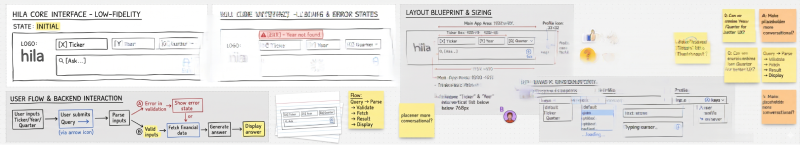

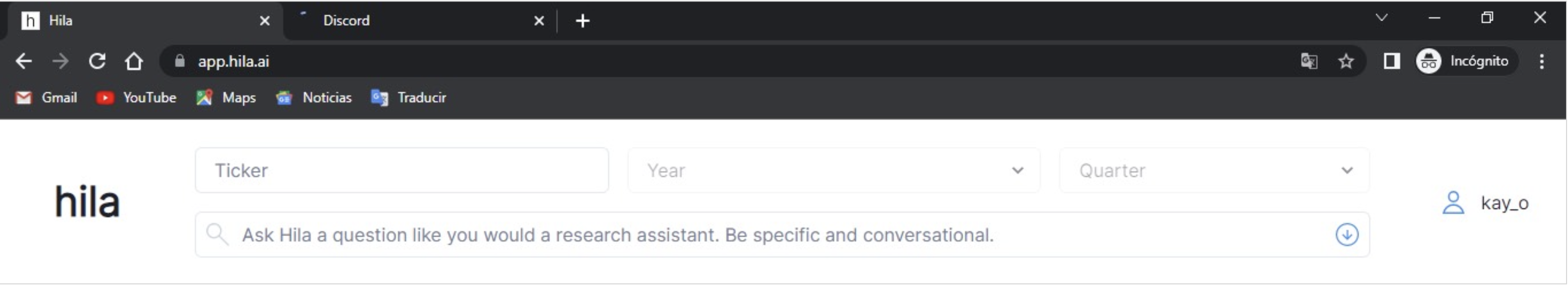

In 2022, while everyone asked what AI could write, we asked what it could help people think, specifically with financial data. We weren't a product team with a roadmap. We were five people with a hypothesis, a Discord server, and a willingness to find out quickly if we were wrong. We launched a narrow public beta: enter a ticker, ask a question, get answers from earnings transcripts.

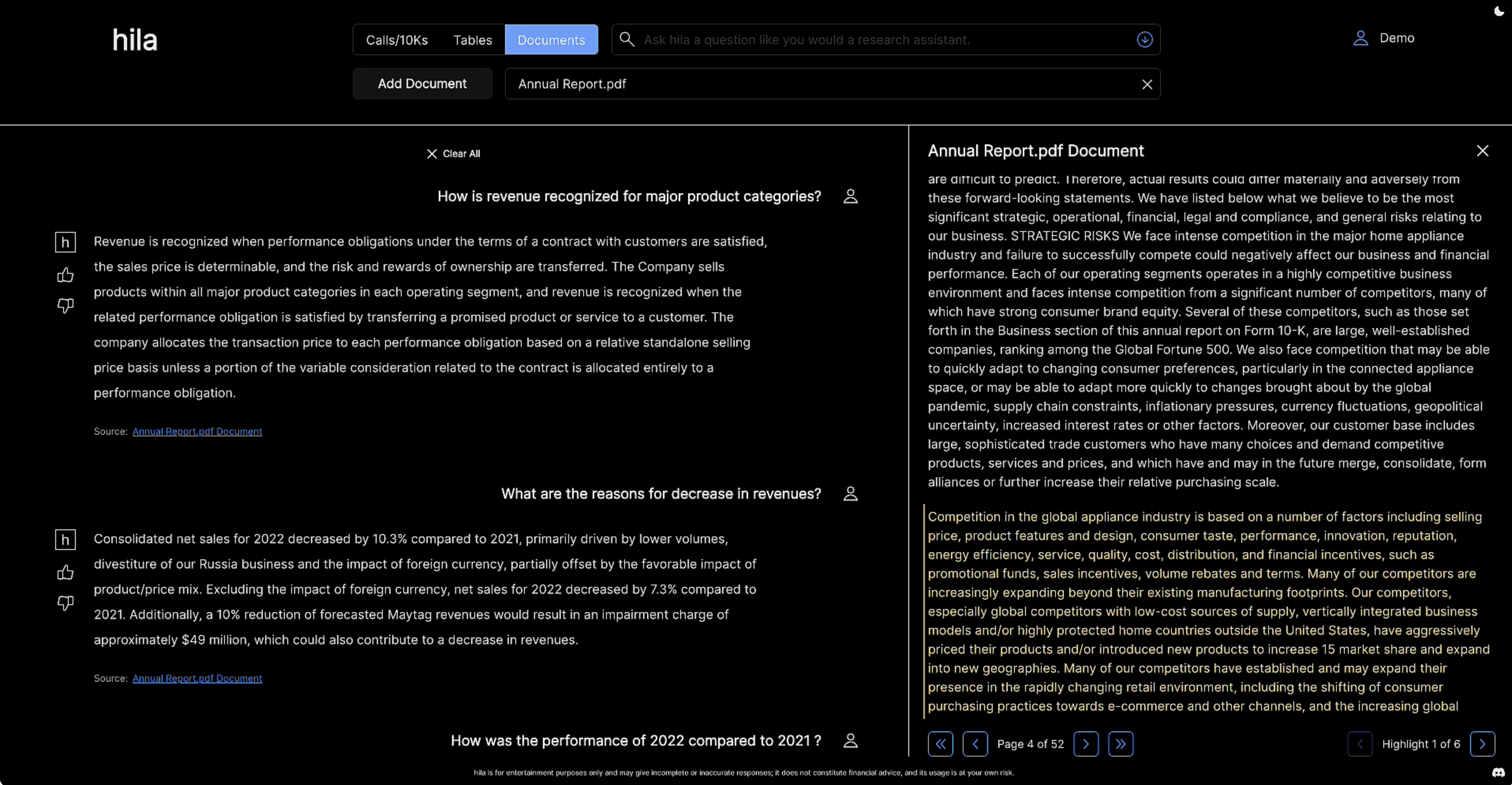

Users didn't just want answers. They wanted to verify them. That signal reshaped everything. It gave us a design philosophy: Show your work.

The Pivot: Accuracy vs Citations

We initially explored a "Perplexity-style" interface, using inline footnotes to link specific sentences to data sources.

The prototype failed for two reasons:

- Technical Synthesis: Since Hila’s answers were a synthesis of multiple data points (not just direct quotes), the citations felt forced and often inaccurate.

- Interaction Friction: Users found the interface fragmented. They had to hunt for tiny numbers and click multiple times to verify a single report.

I discarded the citations in favor of a centralized Reasoning Module. This allowed us to show the logic of the synthesis in a single click, rather than scattering fragments of data across the text.

The Trust System

I designed three interlocking transparency layers:

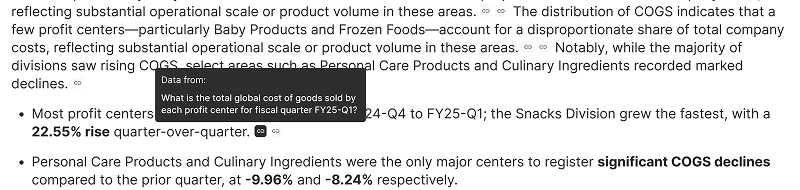

Reasoning Module

Exposed the data retrieved and calculations behind every answer. In 18% of sessions, users who opened it immediately sent a follow-up that corrected the calculation. Not reassurance-seeking. Genuine error-catching.

View Code

Surfaced the raw SQL for technically fluent users.

Together, these two features addressed two distinct dimensions of trust. The Reasoning Module was about process: how did you calculate this? View Code was about source: exactly what data did you use? We stopped thinking of them as features and started thinking of them as a trust system.

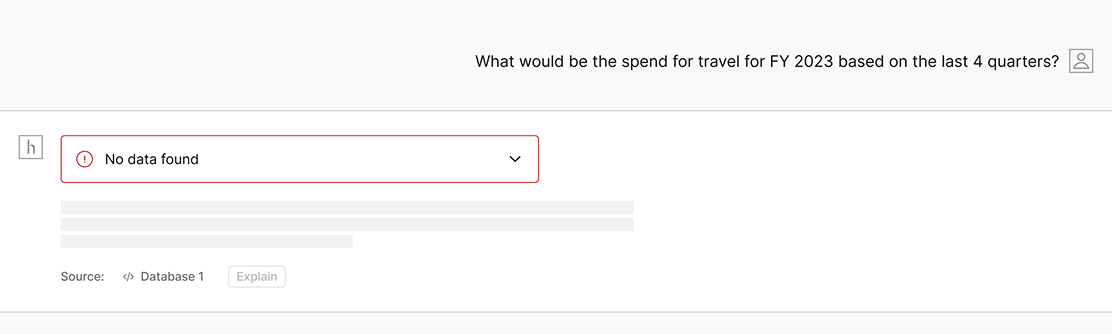

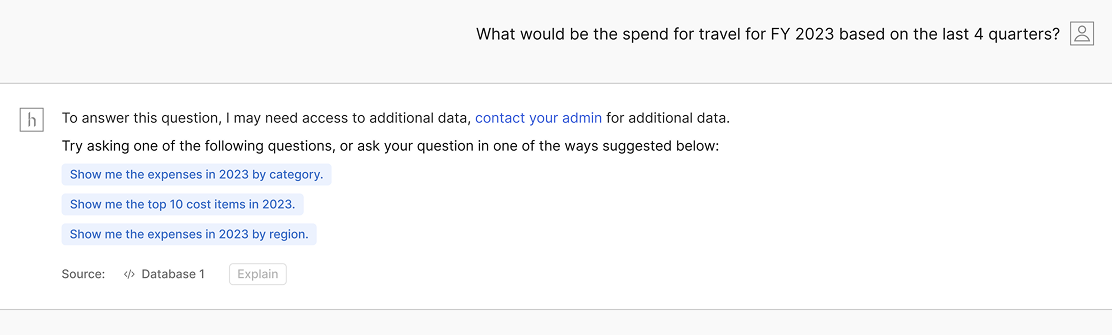

Error Redesign

We initially followed standard UI patterns: red alerts for errors, on the assumption that users wanted clear, immediate feedback on system limitations. In reality, red felt like a 'stop sign' in a conversation, and the error states were dead ends. Users read them as system failure and left. Momentum died and confidence eroded. I redesigned errors as conversational recovery moments: here's why I can't answer this, here's what to try instead. Bounce rate on error states dropped 22%.

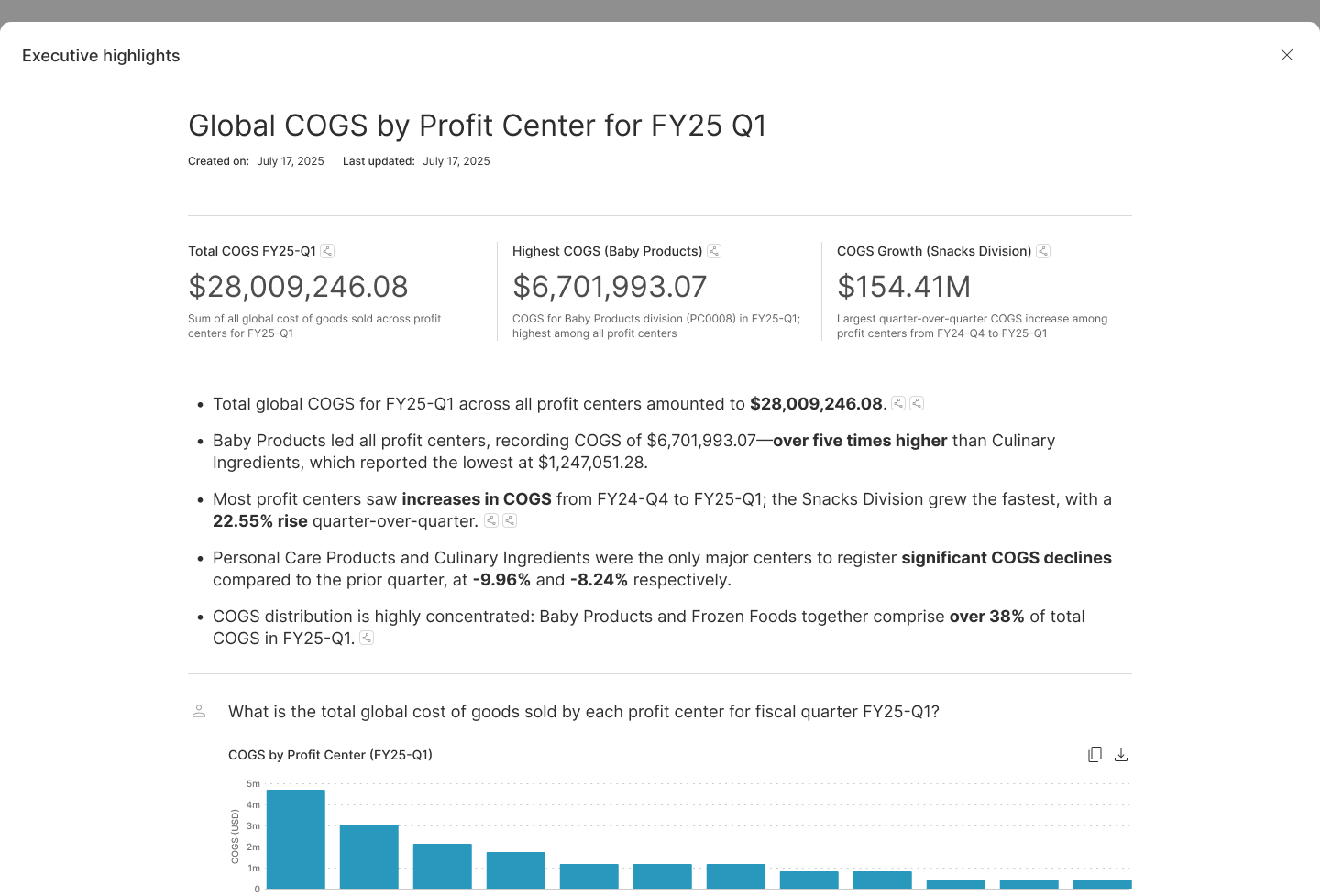

Deep Analysis

Complex queries were failing 17% of the time. I designed — and named — Deep Analysis: a mode that decomposed hard questions into targeted sub-questions, answered each, then reassembled a unified report. Users could edit the breakdown questions to refine the output.

- Failure rate: 17% → 2%

- Users accepted a 15–30 second wait with no friction

- Qualitative shift: from frustration to "it shows its work"
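The decompose, answer, reassemble flow described above can be sketched in a few lines of Python. This is a minimal illustration, not Hila's implementation: the names `deep_analysis`, `decompose`, `answer_one`, and `edit` are hypothetical, and the model calls are replaced with offline stand-ins so the sketch runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class SubAnswer:
    question: str
    answer: str


def deep_analysis(
    question: str,
    decompose: Callable[[str], List[str]],
    answer_one: Callable[[str], str],
    edit: Optional[Callable[[List[str]], List[str]]] = None,
) -> str:
    """Decompose a question, answer each part, and assemble one report."""
    sub_questions = decompose(question)
    # Optional user step: let the user edit the breakdown before answering.
    if edit is not None:
        sub_questions = edit(sub_questions)
    parts = [SubAnswer(q, answer_one(q)) for q in sub_questions]
    # Keep each sub-question next to its answer so the report shows its work.
    lines = [f"Report: {question}"]
    for i, part in enumerate(parts, start=1):
        lines.append(f"{i}. {part.question}")
        lines.append(f"   {part.answer}")
    return "\n".join(lines)


# Hypothetical stand-ins for the model calls, so the sketch runs offline.
def fake_decompose(question: str) -> List[str]:
    return ["What was revenue last quarter?", "What was revenue a year earlier?"]


def fake_answer(sub_question: str) -> str:
    return f"(answer to: {sub_question})"


report = deep_analysis(
    "How did revenue change year over year?",
    fake_decompose,
    fake_answer,
    edit=lambda qs: qs + ["What drove the change?"],
)
print(report)
```

Passing the edit step in as a function mirrors the design choice in the product: the breakdown is visible and adjustable before any sub-question is answered, and the final report keeps each sub-question beside its answer.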

Scale

Vianai pivoted to make Hila its flagship enterprise product. The team grew from 5 to 50. The trust problem turned out to be what enterprise was willing to pay for.

What Didn't Ship

I prototyped a conversational dataviz layer — users talking directly to their charts via natural language, powered by ECharts and a lightweight LLM. It worked. Leadership shelved it as premature, then reopened it as future-state vision. Right call, right outcome.

What Users Said

“Connect it to your data sources and it’ll just start answering your questions. After a few thumbs up/down it’ll get it right. Almost magic.”

— Boris Evelson, VP, Forrester Research

“I can rapidly search 10-Ks and earnings calls to find anything related to my theses... quickly, without wasting time skimming irrelevant topics.”

— Mike Ostroff, Investment Analyst, Maverick Capital

Impact

- 0 to 4,000 users: Rapid adoption within the first 4 months of the public beta.

- $1M ARR: Achieved within the first year by converting trust into enterprise contracts.

- 17% → 2% failure rate: De-risked complex queries by introducing 'Deep Analysis,' turning a technical roadblock into a core product differentiator.

Reflection

Hila taught me that the best AI products feel effortless not because the AI is sophisticated — but because the design earns trust. We didn't win by building the most impressive AI. We won by building the most trustworthy one.